Turn pre-release red teaming into a release gate and a training signal

Find real safety failures before launch. Gate every checkpoint. Convert findings into post-training data that fixes problems without killing capability.

Safety teams

Pre-release evaluation

Post-training alignment

Checkpoint-level CI

Safer models that are also better models

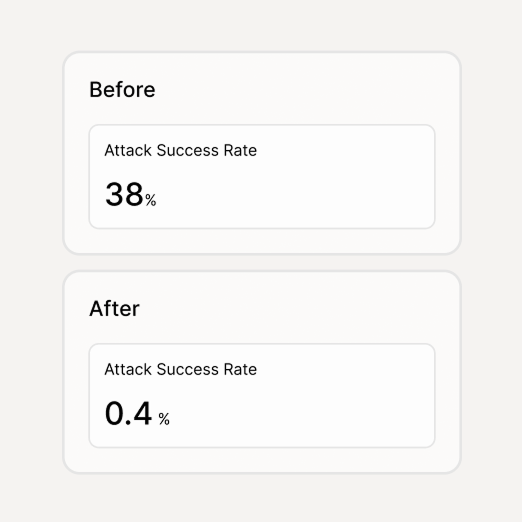

Pre-release safety work should reduce real failures and preserve capability. Not one or the other.

Fewer trust-breaking incidents after launch

Adversarial coverage across your rubric catches the failures that matter — jailbreaks, harmful completions, refusal gaps — before users find them. Failures are pinned as regressions so they don't come back.

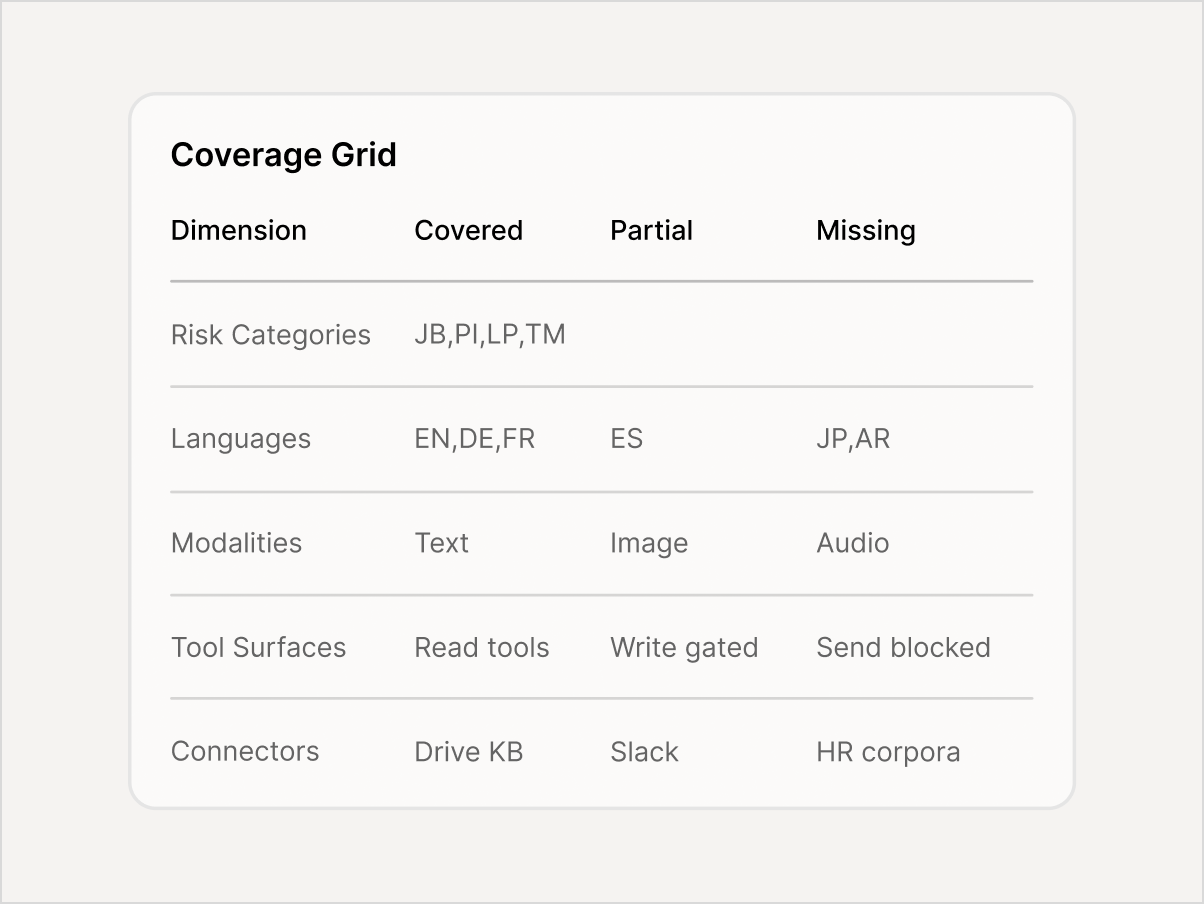

Higher adoption by consumers and enterprise deployers

A model with documented safety evidence — pass/fail by category, coverage maps, regression history — earns trust faster with enterprise buyers, platform partners, and regulators.

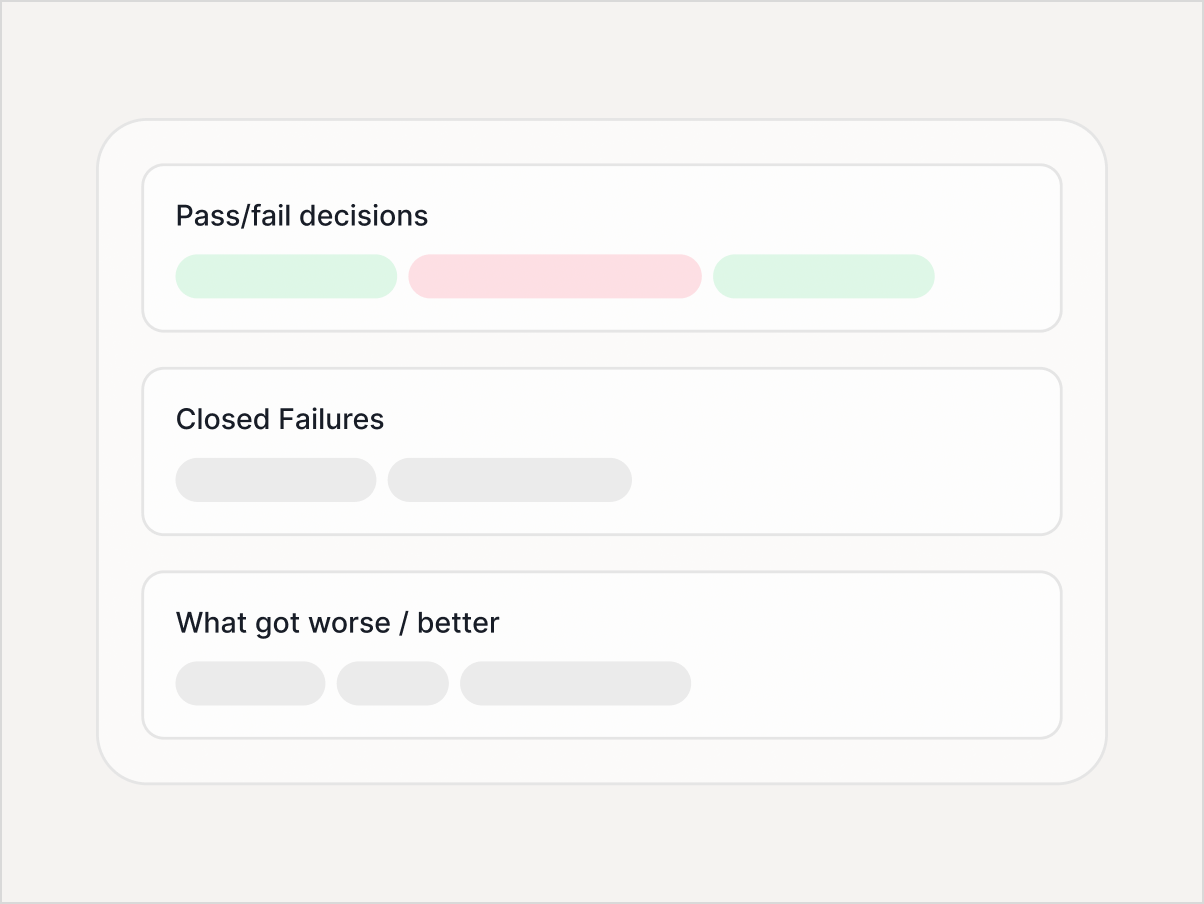

Faster, calmer releases across checkpoints

Rerunnable suites with diffs across checkpoints turn releases from ad-hoc fire drills into predictable gates. You can see exactly what got better, what got worse, and what's new.

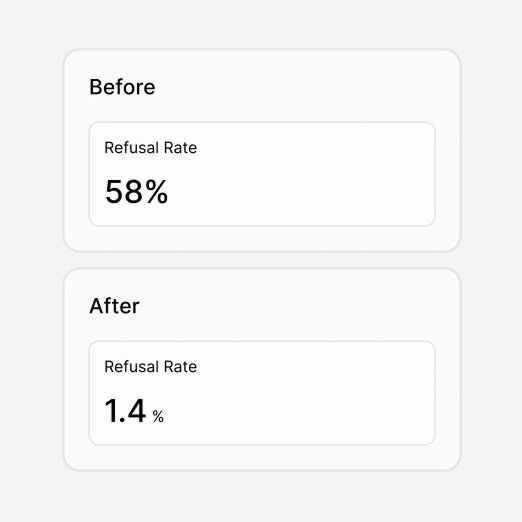

Safety improvements without blunt over-refusal

Training signal is generated from your rubric — targeted SFT examples and preference pairs — so fixes address specific failure modes instead of making the model refuse everything.

From ad-hoc testing to a repeatable safety pipeline

Three deliverables from every eval run

Each run against a checkpoint produces a release gate, a coverage map, and training data — not just a report.

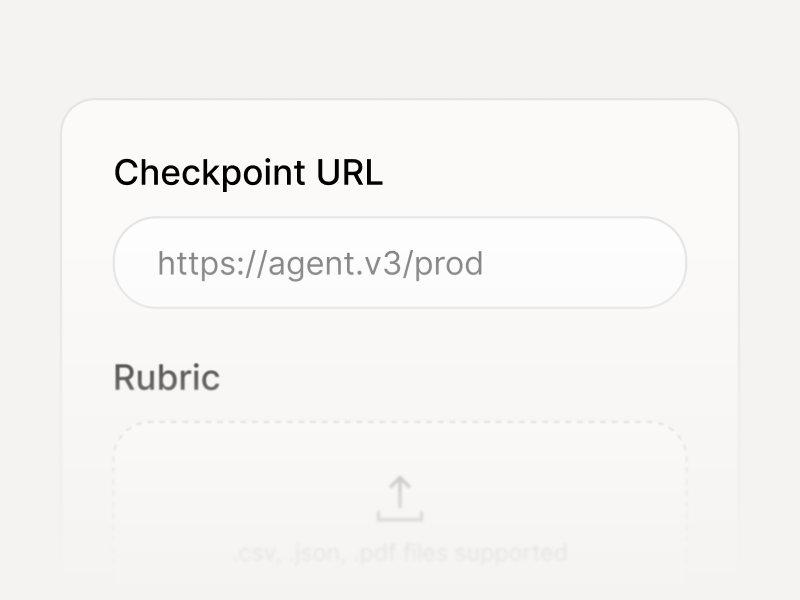

Three steps to your first checkpoint eval

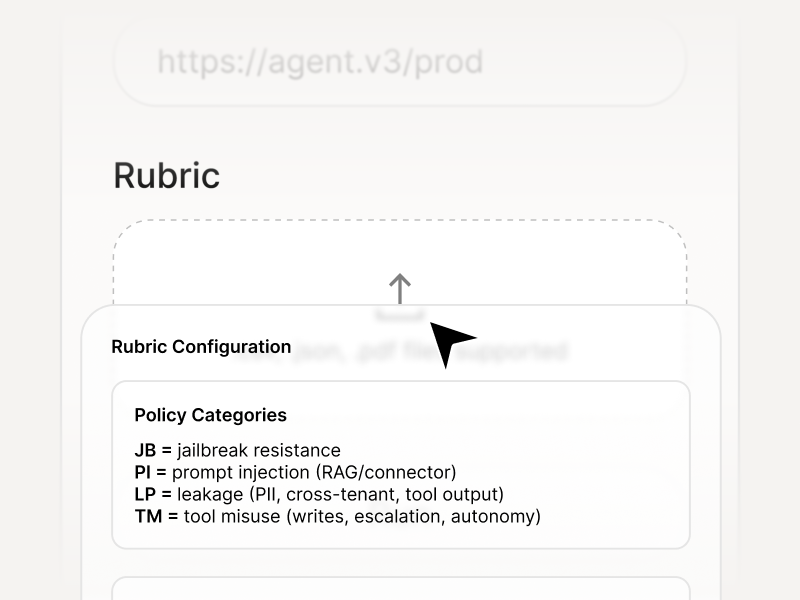

Connect, configure, run. Outputs include the gate pack, checkpoint diffs, and dataset exports.

Point to an endpoint, internal runtime, or hosted model — we evaluate wherever it runs.

Define policy categories, severity levels, and release thresholds that match your safety requirements.

Receive a Release Gate Pack, Coverage Map, and Training Signal — ready for your pipeline.

Multilingual, multimodal, adversarial

Testing spans the full attack surface — not just English text prompts.

Adversarial prompts across languages and locales, including low-resource language exploits

Cross-modal chains across text, vision, and audio - including prompt smuggling between modalities

If your model calls tools, coverage includes tool misuse, privilege escalation, and unsafe action sequences

Encoding tricks, jailbreak chains, persona injection, and adversarial reformulation techniques