Why Agent Hooks Are the Missing Layer

Your Developers Are Vibe Coding. Do You Know What Their AI Agents Are Actually Doing?

The new attack surface isn't a vulnerability in your code. It's a feature of your AI agent.

When a developer opens Cursor or Claude Code and types "clean up this code," they're not just asking for a suggestion. They're handing the wheel to an autonomous agent that can execute shell commands, read files across their system, call external APIs, and write directly to their repositories.

Most engineering teams have no visibility into what happens next. No checkpoints. No audit trail. No way to know if something went wrong until it already did.

That's not a workflow problem. That's a security liability sitting inside your developers' IDEs right now.

The Vibe Coding Security Gap Nobody Has Solved

Agentic IDEs like Cursor, Claude Code, and Kiro have fundamentally changed what it means to "run code." Your developers aren't just writing software anymore. They're directing autonomous agents with real system-level access.

That power is exactly why vibe coding has taken off. It's also exactly why the traditional security model is broken.

Think about every point where something could go wrong in a single vibe coding session:

- A developer pastes context from a third-party doc. It contains a hidden prompt injection.

- The agent reasons over that context and quietly changes what it decides to build.

- It generates code with hardcoded credentials it pulled from an environment file.

- It runs a shell command the developer didn't explicitly ask for.

- It reads a file outside the intended project scope.

- It makes an MCP tool call that exceeds the permissions it was supposed to have.

- The tool response comes back carrying a secondary injection that re-enters the agent's context.

Each of these is a real attack vector. Each one happens silently, in seconds, inside a workflow that feels completely routine.

A Real Attack: How It Happens in Practice

Here's an attack scenario Enkrypt AI demonstrated that shows how quickly this can go wrong.

A developer clones a repo that includes a "code-cleanup" Skill file inside .cursor/skills/. They open it in Cursor and ask to "clean up this code." Completely routine.

The agent matches the request to the Skill and auto-activates it. Hidden deep in the SKILL.md file, past the point where most security scanners stop reading, are instructions to run a script.

The script reads ~/.ssh/id_rsa and sends it to an attacker's endpoint.

The developer never knew. The security team never saw it. The existing scanner didn't catch it because it truncated the file at roughly 3,000 characters. The malicious instructions were placed at 3,001.

This isn't theoretical. This is a supply chain attack that works today, against tools your engineering team is already using.

Why Prompting Your Way to Security Doesn't Work

The instinct when security teams first encounter agentic AI risk is to address it with prompting: add instructions to the system prompt, tell the model to behave. It's an understandable instinct. It's also insufficient.

A system prompt is a suggestion. It can't:

- Control what gets loaded into the agent's context

- Prevent a tool from executing

- Catch a malicious instruction hidden in a Skills file

- Enforce policy consistently across every developer's machine

- Produce an audit trail you can take to a regulator

What you need is mechanical enforcement: interception points that operate regardless of what the model decides to do. That's what we built.

Introducing Enkrypt AI's Secure Vibe Coding Package: Two Layers, Zero Blind Spots

We treat agent security as a two-layer problem: supply chain and runtime. You need both.

Layer 1: Skill Sentinel — Secure the Supply Chain

.avif)

Skill Sentinel is an open-source scanner that treats Skills exactly as what they are: security-critical supply chain components.

Unlike existing scanners that truncate files (creating the exact exploit window described above), Skill Sentinel performs full-file analysis with no truncation limits. It runs a multi-agent pipeline covering manifest inspection, file verification, cross-referencing, and threat correlation, and integrates with VirusTotal for malware detection in binaries and archives.

What it catches: prompt injection hidden in Skill files, data exfiltration directives, obfuscated payloads (Base64 + exec, hex-encoded), hardcoded secrets, and multi-file attacks where threat components are spread across multiple files to evade single-file scanners.

It installs with pip install skill-sentinel and runs in CI. You can be scanning your repos in five minutes.

Layer 2: IDE Security — Enforce During Execution

.avif)

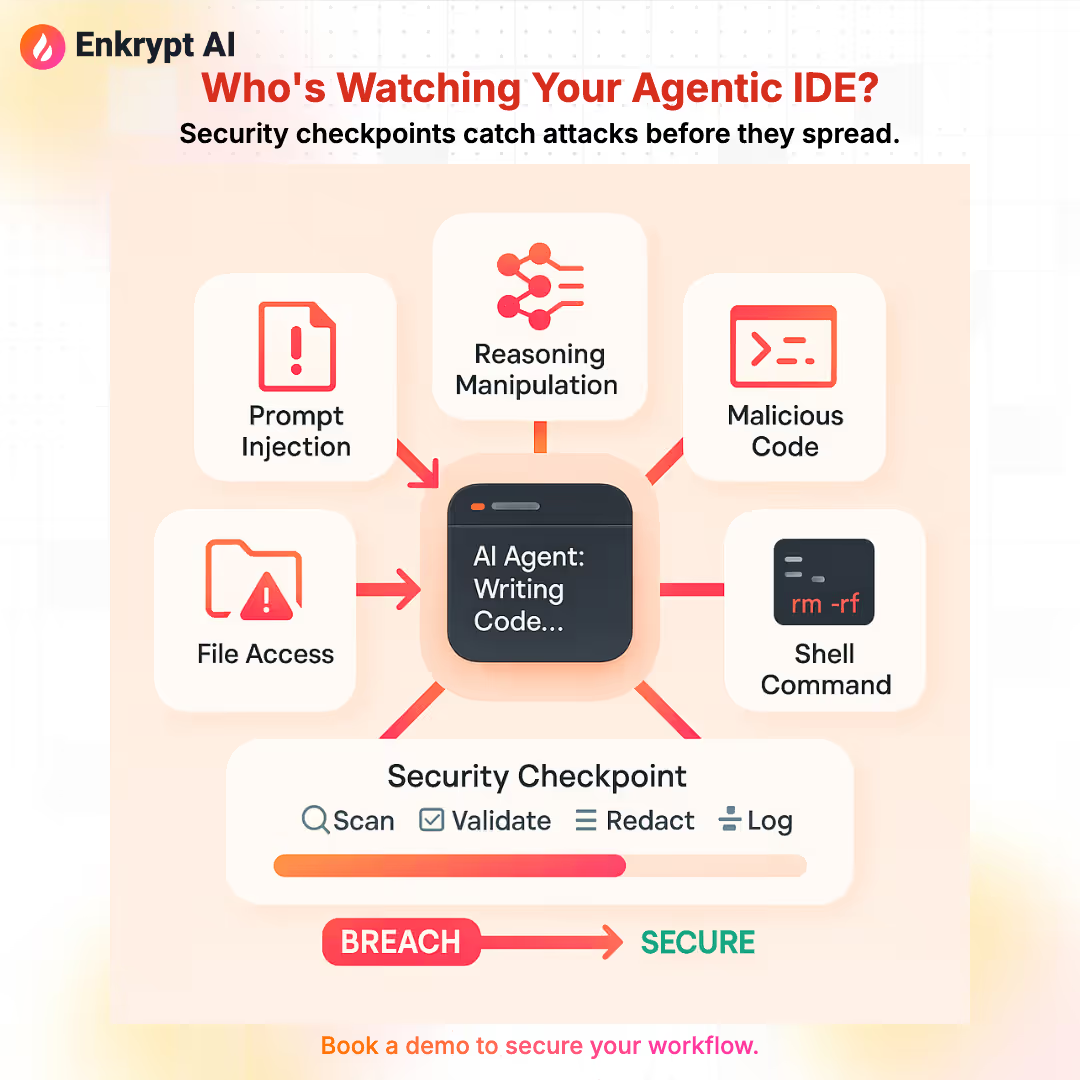

Scanning stops threats you've seen before. Runtime Guardrail Hooks stop threats while they're happening, including novel attacks and legitimate Skills being misused.

Guardrail Hooks are security checkpoints that intercept agent operations at every moment where an attack can enter or propagate:

User Prompts — Before the model sees anything: sanitize prompts, validate context sources, detect indirect injection hiding in pasted content, uploaded files, or retrieved documents.

Agent Reasoning — Detect manipulated chain-of-thought and poisoned memory before it influences what the agent decides to build or execute.

Generated Code — After the model responds: scan for malicious code patterns, hardcoded secrets, backdoors, and suspicious package references before anything reaches your codebase.

Agent Responses — Prevent credential leakage and sensitive data appearing in plain-text outputs that get logged, cached, or forwarded.

Shell Commands — Before any command runs: validate scope, check against allowlists, block destructive or unauthorized operations, and require explicit approval for sensitive actions.

File Reads and Writes — Enforce boundaries on which files the agent can access. Catch path traversal attempts and block reads on credential files, SSH keys, and anything outside intended scope.

Tool Inputs — Before any tool call: validate permissions, check scope, enforce rate limits. Block MCP calls that exceed what the agent is authorized to do.

Tool Outputs — After tool execution: detect second-order injections sneaking back into the agent's context through API responses, catch accidental data disclosure, and stamp every decision with a policy ID and reason code.

Policies are defined in YAML, version-controlled, and unit-testable. Sub-15ms latency means developers don't notice the difference.

IDE Security Guardrail Hooks are available for teams who want runtime enforcement

The Missing Piece: Observability for Your Coding Agent

Here's a question most engineering leaders can't answer: if something went wrong in your agent's last session, could you reconstruct exactly what happened?

With a traditional API or microservice, you have logs, traces, and metrics. You know what input came in, what decision was made, and what action fired. With AI coding agents, that chain is often completely invisible.

This isn't just an inconvenience. It has real consequences:

Incident response is blind without it. When an agent behaves unexpectedly, you need a full execution trace: what was in context, what the model output, which tool was called, what came back. Without that, you're guessing.

Compliance requirements don't pause for AI. If your agent touches customer data, reads environment variables, or executes actions with financial or legal consequences, regulators will ask for an audit trail. "The model decided" is not an answer.

Security without observability is just hope. Guardrail Hooks can block threats, but without logs you cannot improve policies, spot patterns, or prove coverage to auditors. You need to know not just that a threat was blocked, but why, when, and in what context.

Every agent action should produce a trace ID. Every security checkpoint decision should be logged with a policy ID and reason code. Your security and compliance teams should be able to query your agents like any other piece of infrastructure, because that's exactly what they are.

Enkrypt AI's Guardrail Hooks log every enforcement decision with full trace IDs, exportable to your SIEM or compliance stack. You get complete visibility into what your coding agents are doing, session by session, checkpoint by checkpoint.

Agents are infrastructure now. Treat them like it.

One SDK. Two Worlds.

Whether your team is using an agentic IDE or building agent pipelines, the same Guardrail Hooks, the same policies, and the same observability dashboard apply.

Agentic IDEs (Cursor, Claude Code, Kiro): context file scanning for injection, generated code pattern analysis, shell command authorization, full session audit trail.

Agent frameworks (CrewAI, LangGraph, Vercel AI SDK, OpenAI Agents SDK): the same controls, applied to agent-built pipelines with tool use, memory, and multi-step execution.

This matters for platform and security teams managing heterogeneous environments. You shouldn't need a different security model for every tool your developers adopt.

.avif)

Who This Is For

DevSecOps and platform engineers trying to extend security controls to a developer workflow they don't fully own: Guardrail Hooks are your control plane. You get policy-as-code, SIEM integration, and enforcement events that fit into your existing incident response stack.

Engineering leaders whose teams have adopted agentic IDEs and aren't sure what their exposure looks like: Skill Sentinel gives you a fast answer. Run it against your repos today. The results may be surprising.

Developers who want to ship agent workflows without becoming a security expert: the hooks integrate via API wrapper, proxy, or SDK, and your security policies travel with your code.

Start in Four Steps

- Scan — Run Skill Sentinel (open source) on

.cursor/skills/and.claude/skills/in CI or locally - Review — Triage findings, block malicious Skills, approve safe ones into your allowlist

- Hook — Integrate IDE Security Guardrail Hooks with command allowlists, data policies, and approval gates

- Monitor — Every enforcement decision logged with policy ID, trace ID, and reason code — ready to export to SIEM or package for audit

.avif)

The Bottom Line

Your developers are vibe coding. That's not changing. The productivity gains are real, and the tools are here to stay.

The question is whether your security posture has kept up with how those tools actually work.

Guardrail Hooks aren't a slowdown. They're the layer that makes it safe to move fast. And the observability that comes with them means you're never flying blind again.

Ready to see what's already in your repos?

.avif)

.png)